Editor’s Note: This post is republished with permission from Trust Insights, a company that helps marketers solve problems with collecting data and measuring their digital marketing efforts.

Click the corresponding links to read Part 1, Part 2, Part 3, Part 5, and the Post-Mortem of this series.

Introduction

The recurring perception that artificial intelligence (AI) is somehow magical and can create something from nothing leads many projects astray. That’s part of the reason that the 2019 Price Waterhouse CEO Survey shows fewer than half of US companies are embarking on strategic AI initiatives: the risk of failure is substantial. In this series, we’re examining the most common ways AI projects fail for companies at the beginning of their AI journey. Be on the lookout for these failures, and ways to remediate or prevent them, in your own AI initiatives.

Part 4: Modeling-Related AI Failures

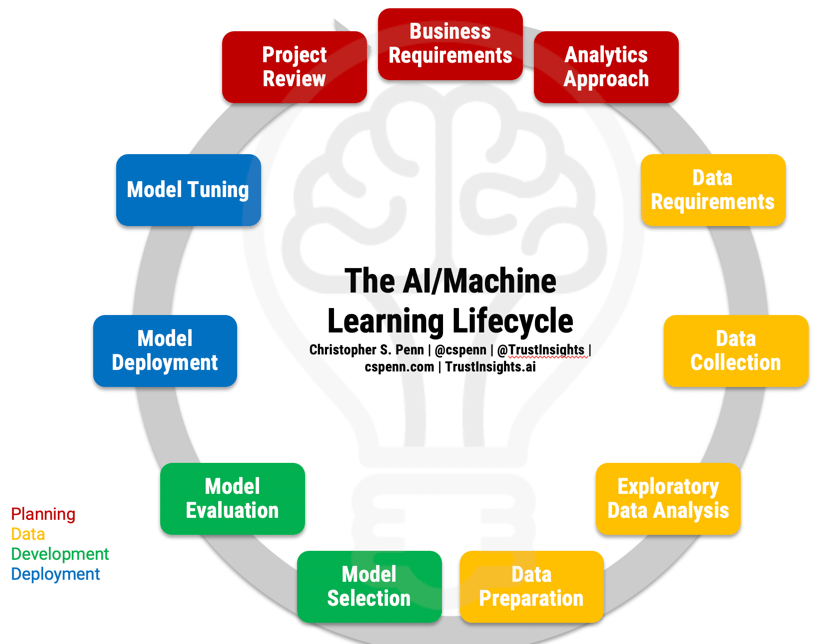

Before we can discuss failures, let’s refresh our memories of what the AI project lifecycle is to understand what should be happening.

Grab the full-page PDF of the lifecycle from our Instant Insight on the topic and follow along.

Now that we’ve identified the major problems in overall strategy, let’s turn our eyes to the major problems we are likely to encounter in the modeling portion of the lifecycle:

- Model Selection

- Model Evaluation

Model Selection

Choosing a model in machine learning and AI revolves around two key decisions:

- What kind of data are we dealing with?

- What are the models for that kind of data that provide the best balance of competing objectives?

A key mistake many novice machine learning companies and practitioners make is just picking the models that they know, or picking models based solely on one criterion: known model performance. You’ll often read about specific models and techniques being used to win major competitions; for example, XGBoost has been the darling of Kaggle competitions for years. The antidote is to start not with what’s popular, but with what kind of data you have.

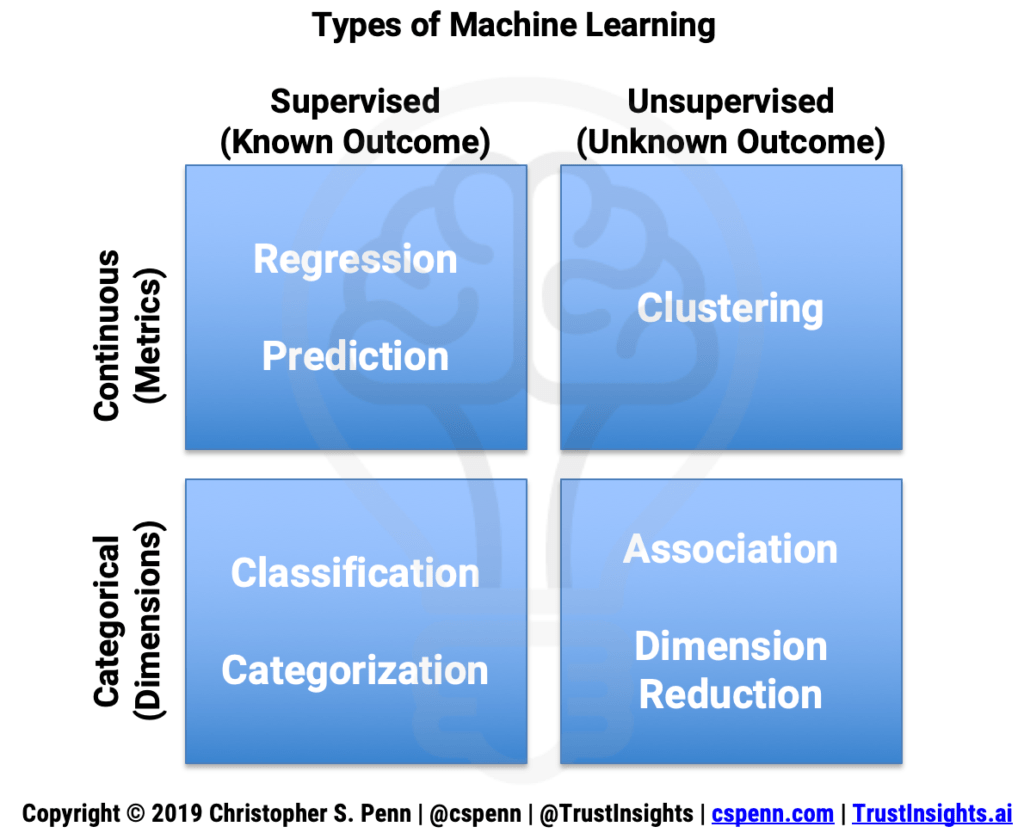

Consider the machine learning problem matrix:

We face four different kinds of problems:

- Supervised continuous – we know what outcome we’re after, and the data is numeric

- Unsupervised continuous – we don’t know what outcome we’re after, and the data is numeric

- Supervised categorical – we know what outcome we’re after, and the data is non-numeric

- Unsupervised categorical – we don’t know what outcome we’re after, and the data is non-numeric

What kinds of problems might we solve in marketing with these?

- Supervised continuous – what channels drive conversions on our website?

- Unsupervised continuous – which SEO data points should we pay attention to?

- Supervised categorical – what’s the sentiment of this pile of Instagram posts?

- Unsupervised categorical – what are the most common themes in this collection of articles?

Inside each of these categories, we have an arsenal of techniques:

- Supervised continuous

  - Regression: ordinal, Poisson, random forest, boosted tree, linear, deep learning

- Unsupervised continuous

  - Clustering: K-Means, covariance, deep learning

- Supervised categorical

  - Classification: decision tree, logistic regression, neural network, KNN, naive Bayes, deep learning

- Unsupervised categorical

  - Dimension reduction: PCA, LDA, CCA, t-SNE, deep learning
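The matrix above can be sketched as a small lookup table keyed on the two questions from the start of this section. This is only an illustration: the names `PROBLEM_MATRIX` and `classify_problem` are hypothetical, and the pairings simply mirror the lists in this post.

```python
from typing import Tuple

# Keys are (outcome_known, data_is_numeric); values are
# (problem type, technique family) exactly as listed in the text.
PROBLEM_MATRIX = {
    (True, True): ("supervised continuous", "regression"),
    (False, True): ("unsupervised continuous", "clustering"),
    (True, False): ("supervised categorical", "classification"),
    (False, False): ("unsupervised categorical", "dimension reduction"),
}


def classify_problem(outcome_known: bool, data_is_numeric: bool) -> Tuple[str, str]:
    """Map the two questions about our data to a cell of the matrix."""
    return PROBLEM_MATRIX[(outcome_known, data_is_numeric)]


# "What's the sentiment of this pile of Instagram posts?"
# We know the outcome we want (a sentiment label) and the data is text.
print(classify_problem(outcome_known=True, data_is_numeric=False))
```

Starting from the data this way narrows the candidate models before popularity or leaderboard results enter the picture.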

Each technique comes with advantages and tradeoffs, which leads to the second major problem and point of failure: choosing a model based only on performance. The reality of production machine learning is that we have to balance not only the performance of the model, but also its computational cost. Some machine learning algorithms deliver best-in-class model performance, but have punishing computational requirements that make them impractical for use in production, especially in real-time environments.

For example, have you noticed that sentiment analysis, especially in social media monitoring tools, continues to be uniformly bad? It’s not because sentiment analysis software is bad. It’s because the compute cost of some of the best sentiment analysis techniques is far too high for a software-as-a-service (SaaS) company. We’ve come to expect instant results when we click or tap a button on our devices, and sentiment analysis done well is anything but instant. As a result, SaaS companies still rely on older, less effective sentiment analysis models that deliver poor results, but are instant from the user’s perspective.

What’s the right balance your company needs to provide in its machine learning projects? The answer is dependent on costs—how much compute are you willing to buy? Ultimately, machine learning and AI should be leading to greater cost efficiency, so be sure to choose models that will deliver better results at lower costs.
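One way to make that balance explicit is to set a compute budget first and then pick the best model that fits inside it. The sketch below is a minimal illustration, not a prescribed method: the `Candidate` class, the `pick_model` function, and all of the scores and latencies are made up for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    name: str
    score: float       # higher is better, e.g. accuracy on held-out data
    latency_ms: float  # measured time to produce one prediction


def pick_model(candidates: List[Candidate], max_latency_ms: float) -> Optional[Candidate]:
    """Return the best-scoring candidate within the latency budget, or None."""
    affordable = [c for c in candidates if c.latency_ms <= max_latency_ms]
    if not affordable:
        return None
    return max(affordable, key=lambda c: c.score)


# Hypothetical numbers purely for illustration; measure your own models.
candidates = [
    Candidate("bag_of_words", score=0.70, latency_ms=2.0),
    Candidate("word_vectors", score=0.82, latency_ms=15.0),
    Candidate("large_pretrained", score=0.91, latency_ms=900.0),
]

choice = pick_model(candidates, max_latency_ms=100.0)
print(choice.name if choice else "no model fits the budget")  # word_vectors
```

With a 100 ms budget, the highest-scoring model is ruled out on cost alone, which is the same tradeoff the SaaS sentiment analysis example describes.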

This leads to a final mistake on model selection: the landscape is changing so quickly that if you and your team aren’t keeping current, you could be missing out on massive cost savings and improved results. Going back to the sentiment analysis example, in the world of natural language processing (NLP), the oldest style of NLP used primitive approaches like bag-of-words, which is what most sentiment analysis in today’s production tools still relies on. In 2016, vectorization took over the crown of performance, and libraries like Facebook’s fastText became the reigning champions while not substantially increasing compute costs. Starting in 2018, pre-trained models like BERT, ELMo, Grover, and GPT-2 took the crown from vectorization, and the current gold standard in NLP is the pre-train/fine-tune approach used by GPT-2 and XLNet. If you’re not keeping pace with the latest advances, you may be missing massive opportunities to improve your quality and your efficiency.
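To see why bag-of-words sentiment is fast but weak, here is a minimal sketch of the idea. It is an assumption-laden toy, not how any particular vendor implements it: the word lists and the `bag_of_words_sentiment` function are hypothetical, and real tools use far larger lexicons.

```python
# Tiny hypothetical lexicon for illustration only.
POSITIVE = {"good", "great", "love", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "sad"}


def bag_of_words_sentiment(text: str) -> int:
    """Positive word count minus negative word count; word order is ignored."""
    words = text.lower().split()
    positives = sum(word in POSITIVE for word in words)
    negatives = sum(word in NEGATIVE for word in words)
    return positives - negatives


print(bag_of_words_sentiment("I love this great product"))  # 2
print(bag_of_words_sentiment("this is not good"))           # 1
```

The second sentence is scored as positive because the approach only counts words and never sees that "not" reverses "good". That blindness to context is what the later vector-based and pre-trained approaches address, at a higher compute cost.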

Model Evaluation

Once we’ve selected a model, we need to evaluate its performance. There are two key mistakes which occur in model evaluation: incorrect measure selection and overfitting.

Incorrect measure selection is very straightforward: you’re using the wrong metric to evaluate the performance of a model. This happens most often with novice machine learning engineers who misclassify a problem, or who don’t have any statistical training as part of their background. Many folks who find their way into machine learning from a software and coding background lack formal training in statistics. For example, which is better, mean squared error (MSE) or R^2? The answer depends on the kind of regression problem we’re trying to solve. MSE squares each error, so large misses dominate the result, which makes it good for detecting unusual, unexpected values. R^2 is scale-free, which helps us tune and compare models.
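The difference between the two metrics is easier to see with numbers. The sketch below computes both from their standard definitions on a small made-up data set, then repeats the calculation with every value multiplied by 100 (say, dollars converted to cents). The data and function names are illustrative assumptions.

```python
from typing import Sequence


def mse(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean squared error: the average of the squared prediction errors."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


def r_squared(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """R^2: 1 minus (residual sum of squares / total sum of squares)."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return 1 - ss_res / ss_tot


# Made-up values for illustration.
actual = [10.0, 20.0, 30.0, 40.0]
predicted = [12.0, 18.0, 33.0, 39.0]

# The same data expressed in units 100 times smaller.
actual_scaled = [a * 100 for a in actual]
predicted_scaled = [p * 100 for p in predicted]

print(f"MSE: {mse(actual, predicted):.1f} vs {mse(actual_scaled, predicted_scaled):.1f}")
print(f"R^2: {r_squared(actual, predicted):.3f} vs {r_squared(actual_scaled, predicted_scaled):.3f}")
```

MSE grows by a factor of 10,000 when the units change, so it is only comparable between models predicting the same quantity in the same units. R^2 stays the same, which is what "scale-free" means in practice.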

The antidote to incorrect measure selection is formal statistical training, which companies and individuals can easily obtain through online courses.

The second major problem is overfitting. This is also very straightforward: it’s easy to make a machine learning model that performs perfectly on historical data. Hindsight is 20/20, after all. The difficulty is in predicting the future, at least when it comes to supervised learning problems. An overfitted model is one that predicts the past perfectly, but then has horrible performance on new data it receives. This is because the past isn’t the future; we need to build models that can accommodate future uncertainty.
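A deliberately extreme sketch makes the point. Below, a "memorizer" stores every historical answer and so predicts the past perfectly, while a simple straight-line model captures only the general trend. Both models, the function names, and the data are invented for illustration.

```python
from typing import Callable, Sequence


def mse(actual: Sequence[float], predicted: Sequence[float]) -> float:
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


def fit_memorizer(xs: Sequence[float], ys: Sequence[float]) -> Callable[[float], float]:
    """Overfit on purpose: remember every training answer exactly."""
    table = dict(zip(xs, ys))
    fallback = sum(ys) / len(ys)  # anything unseen gets the training average
    return lambda x: table.get(x, fallback)


def fit_line_through_origin(xs: Sequence[float], ys: Sequence[float]) -> Callable[[float], float]:
    """Least-squares slope for the simple model y = slope * x."""
    slope = sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)
    return lambda x: slope * x


# Made-up history (roughly y = 2x with noise) and made-up "future" data.
past_x, past_y = [1.0, 2.0, 3.0, 4.0], [2.1, 3.9, 6.2, 7.8]
new_x, new_y = [5.0, 6.0], [10.1, 11.9]

for name, fit in [("memorizer", fit_memorizer), ("line", fit_line_through_origin)]:
    model = fit(past_x, past_y)
    past_error = mse(past_y, [model(x) for x in past_x])
    new_error = mse(new_y, [model(x) for x in new_x])
    print(f"{name}: error on past = {past_error:.3f}, error on new = {new_error:.3f}")
```

The memorizer has zero error on the past and a large error on new data; the line is slightly wrong about the past but holds up far better on data it has never seen.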

The antidote to overfitting is process-related; in our model evaluation phase, it’s not enough to simply look at model performance. We should be splitting our training data sets into at least 2, if not 3 or more, partitions. The general best practice is to split our data into 60% / 20% / 20% portions. We train our models on the first 60% – the training data. We then use the next 20% as our test data, simulating new data coming into the model, including potential new anomalies. This 80% of data creates our final, production model. We then use the last 20% as validation data, to prove or disprove the final model (without training it more) in a simulated production environment. If the model functions well with all 3 datasets, then it may deal well with future data.
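The 60% / 20% / 20% split described above can be sketched in a few lines. This version is a minimal illustration using only the standard library; the function name `split_60_20_20` and the fixed seed are assumptions, and the partition names follow this post (train, then test, then validation). It shuffles the rows first, which suits data without a time order; for time-ordered data you would more likely split chronologically instead.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_60_20_20(rows: Sequence[T], seed: int = 42) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle a copy of the rows, then cut it into 60% / 20% / 20% partitions."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is repeatable
    n = len(shuffled)
    train_end = n * 6 // 10  # integer math avoids float rounding surprises
    test_end = n * 8 // 10
    train = shuffled[:train_end]
    test = shuffled[train_end:test_end]
    validation = shuffled[test_end:]
    return train, test, validation


train, test, validation = split_60_20_20(range(100))
print(len(train), len(test), len(validation))  # 60 20 20
```

The important property is that the three partitions never overlap, so the validation rows remain genuinely unseen until the final check.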

Next: What Can Go Wrong in Modeling?

Model selection and evaluation can be further improved by what IBM calls “AI for AI,” using automated processes to speed up selection and evaluation. Software such as AutoML and IBM Watson AutoAI can perform model selection at significantly faster speeds than human machine learning engineers and evaluate dozens, if not hundreds, of models all at once for performance.

We’ll next turn our eyes towards what things will most likely go wrong in the deployment portion of the lifecycle. Stay tuned!

Christopher S. Penn

Christopher S. Penn is cofounder and Chief Data Scientist at Trust Insights.
