Editor’s Note: This post is republished with permission from Trust Insights, a company that helps marketers solve problems with collecting data and measuring their digital marketing efforts.

Click the corresponding links to read Part 1, Part 3, Part 4, Part 5, and the Post-Mortem of this series.

Introduction

The recurring perception that artificial intelligence (AI) is somehow magical and can create something from nothing leads many projects astray.

That’s part of the reason the 2019 Price Waterhouse CEO Survey shows fewer than half of US companies are embarking on strategic AI initiatives: the risk of failure is substantial. In this series, we’re examining the most common ways AI projects fail for companies at the beginning of their AI journey.

Be on the lookout for these failures—and ways to remediate or prevent them—in your own AI initiatives.

Part 2: Strategic Failures

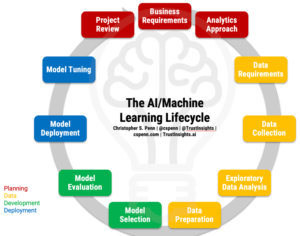

Before we can discuss failures, let’s refresh our memories of what the AI project lifecycle is to understand what should be happening. Grab the full-page PDF of the lifecycle from our Instant Insight on the topic and follow along.

Business Requirements Failures

The most common failure in AI projects, without question, is a failure of business requirements. More often than not, business stakeholders ask for AI not because it solves a problem well suited to the capabilities of AI, but simply because they want to be able to say, “Our product/service/company uses AI!” Technology for technology’s sake is a nearly guaranteed path to disaster.

When we consider the kinds of problems AI can solve, how many current AI projects meet those business requirements? IBM defines six primary use cases for AI:

- Accelerate research and discovery

- Enrich your interactions

- Anticipate and preempt disruptions

- Recommend with confidence

- Scale expertise and learning

- Detect liabilities and mitigate risk

We simplify these into three even broader buckets:

- Save time (reduce inefficiencies)

- Save money (put talent to work on harder problems)

- Make money (faster/better results)

The question we must ask is: Is AI the correct set of technologies to apply to the problem at hand?

Requirements gathering doesn’t need to be a massive undertaking; for small projects and proofs of concept, you can often simply document the answers to questions like these:

- Who is the end user of the project?

- What is the goal of the project? What are the goals of the end users?

- Why is the business undertaking the project?

- Where will the project occur? In AI, this can be a combination of private, on-premises, and cloud systems.

- When do you need to show business results/impact?

AI projects often fail because business requirements are never gathered at all. How are you gathering requirements? What’s your process for other kinds of projects? Generally speaking, AI is simply software, so if your organization is already fluent in requirements gathering for more traditional software projects, there’s very little extra learning curve for AI.

Analytic Approach Failures

In order to deploy AI and machine learning effectively, you need to understand the kind of problem you’re solving at a slightly more technical level. Broadly, machine learning projects fall into two kinds of problems and two kinds of data.

The two kinds of problems we solve for in machine learning are:

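- Classification: We need to bring order to chaos, to tag, sort, and categorize. When the categories are not known in advance, this type of machine learning is typically called unsupervised learning (clustering, for example); assigning items to known, labeled categories is a supervised task.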

- Prediction: We need to understand what’s causing something to happen and build a model that predicts what is likely to happen. This type of machine learning is typically called supervised learning.

The two kinds of data we solve with in machine learning are:

- Continuous data: numerical data, data that can be expressed as numbers.

- Categorical data: anything that isn’t a number.

AI projects go wrong at this stage of the lifecycle when you misunderstand what kind of problem you’re tackling. You may think you have a problem that is non-numeric in nature (such as analyzing written content), but it may actually be a numeric problem once you’ve extracted and cleaned the data. Problems such as sentiment analysis and prediction fit in this category; you’re often transforming categorical data into continuous data, then solving a continuous data problem.
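To make the categorical-to-continuous transformation concrete, here is a minimal sketch of lexicon-based sentiment scoring. The word lists, the `sentiment_score` function, and the sample sentences are all hypothetical and invented for illustration; a real project would likely use a far larger lexicon or a trained model.

```python
import re

# Hypothetical, tiny sentiment lexicon (an assumption for illustration only).
POSITIVE = {"love", "great", "happy"}
NEGATIVE = {"hate", "terrible", "slow"}

def sentiment_score(text: str) -> float:
    """Map free text (categorical) to a number in [-1.0, 1.0] (continuous)."""
    words = re.findall(r"[a-z']+", text.lower())
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    matched = pos + neg
    # Text containing no lexicon words is treated as neutral.
    return 0.0 if matched == 0 else (pos - neg) / matched

# Made-up sample sentences.
reviews = [
    "I love this great product",
    "Terrible and slow support",
    "It arrived on Tuesday",
]
scores = [sentiment_score(r) for r in reviews]
print(scores)
```

Once each piece of text has a score, the rest of the work (averaging, trending, modeling) is an ordinary numeric problem.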

Another classic error at this point is assuming a problem is one kind of machine learning when it may call for a multi-step, ensemble approach. Again, returning to the sentiment analysis example, suppose we need to turn a pile of tweets into a prediction of what kind of tweets earn the most engagement. We think we’re solving for a prediction, and that may be the last step in the problem, but before we can solve for what makes a tweet engaging, we have to solve for turning text into numbers. That’s a classification problem.

Most business applications of machine learning are ensemble problems, sometimes with many, many stages and iterations of problem-solving methods. Only through time and experience will you learn how to assess the different kinds of techniques, stacked together, that you’ll need to build a solution to the problem you’re tackling.
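The two-stage shape of the tweet example can be sketched roughly as follows. This is only an illustration under stated assumptions: the first stage is reduced to a simple hashtag count standing in for the text-to-numbers step, the second stage is a one-variable least-squares fit, and the tweets and engagement numbers are made up.

```python
# Stage 1: turn each tweet (text) into a number. The hashtag count is an
# illustrative stand-in for the text-to-numbers step, not a recommended feature.
def hashtag_count(tweet: str) -> int:
    return sum(1 for token in tweet.split() if token.startswith("#"))

# Stage 2: fit engagement = slope * feature + intercept by ordinary least squares.
def fit_line(xs, ys):
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Made-up (tweet, engagement) pairs purely for illustration.
tweets = [
    ("New post is live", 2),
    ("New post is live #ai", 4),
    ("New post is live #ai #data", 6),
]
xs = [hashtag_count(text) for text, _ in tweets]
ys = [engagement for _, engagement in tweets]
slope, intercept = fit_line(xs, ys)

def predict(tweet: str) -> float:
    """Chain both stages: text -> number -> predicted engagement."""
    return slope * hashtag_count(tweet) + intercept

print(predict("Big news #ai #data #ml"))
```

The point of the sketch is the chaining: the prediction step cannot run until an earlier step has converted the text into numbers, so scoping the project as "just a prediction" would miss half the work.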

Next: What Can Go Wrong in Data?

Now that we’ve established the most common mistakes in the business strategy portion, we’ll next turn our eyes towards what things will most likely go wrong in the data portion of the lifecycle. Stay tuned!

Christopher S. Penn

Christopher S. Penn is cofounder and Chief Data Scientist at Trust Insights.
