This content is republished with permission from Pandata, a Marketing AI Institute partner.

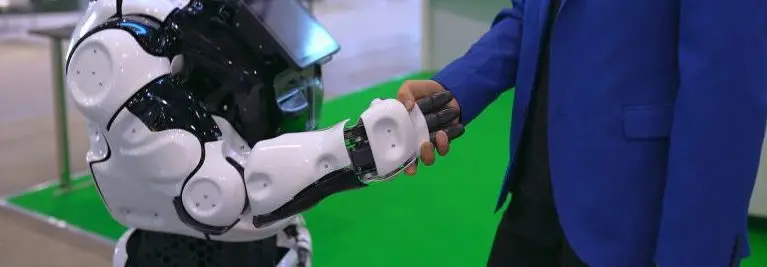

Here at Pandata, we talk a lot about human-centered AI, but what does that mean in application?

It can mean different things depending on the purpose of the solution being created.

Human-centered AI can mean creating an ethically designed algorithm that is trained to benefit the most individuals while minimizing risk and hazards.

It can mean creating a tool that frees up employees for more complex, creative, and fulfilling tasks.

It can also mean augmenting what people are capable of doing or knowing.

It is through this augmentation of human capabilities and knowledge that we see some of the most exciting gains in how we use AI.

We have been hearing about the amazing progress that AI has made in medical diagnosis, particularly with skin cancer.

In some studies, AI has identified dangerous lesions more accurately than human doctors.

One set of doctors was not satisfied with knowing that AI was better: they wanted to know why it was better. So, they decided to reverse engineer how the AI came to its decisions. They learned that by looking at the boundary of skin lesions they could make a better diagnosis. What they learned from the AI made them better doctors.

This process has many business applications as well.

For instance, say you have a model that forecasts sales better than you were able to with human minds alone. Wouldn’t it be wonderful to understand what business processes contribute to the ebbs and flows of the sales cycle so that you could take action?

That was the insight sought by one of Pandata's clients. We took the results of a complex forecasting model and analyzed the inputs to understand which business processes correlated with increases or decreases in sales. Armed with this knowledge, the client was able to enact interventions that can potentially impact revenue.
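To make the idea concrete, here is a minimal sketch of one simple way to see which inputs move with a model's forecasts: rank each input by its correlation with the forecasted sales. This is an illustration, not the method used for the client; the input names (`sales_calls`, `discount_pct`, `support_tickets`), the numbers, and the helper functions `pearson` and `rank_inputs` are all hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric sequences.

    Assumes neither sequence is constant (a constant column would
    cause a division by zero here).
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def rank_inputs(inputs, forecast):
    """Rank inputs by the absolute strength of their correlation with the forecast."""
    scores = {name: pearson(values, forecast) for name, values in inputs.items()}
    return sorted(scores.items(), key=lambda item: abs(item[1]), reverse=True)


# Hypothetical weekly data: made-up business inputs and made-up model forecasts.
inputs = {
    "sales_calls": [10, 12, 15, 11, 18, 20],
    "discount_pct": [5, 5, 10, 0, 10, 15],
    "support_tickets": [30, 28, 25, 31, 22, 20],
}
forecast = [100, 110, 135, 98, 150, 170]

for name, r in rank_inputs(inputs, forecast):
    direction = "rises" if r > 0 else "falls"
    print(f"{name}: r = {r:+.2f} (forecast {direction} as this input increases)")
```

A ranking like this only shows association, not cause. It is a starting point for deciding which business processes deserve a closer look before acting on them.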

Dissecting the black box that is AI allows us to gain insights into how it arrives at its decisions. The benefits of this are twofold.

First, as our dermatologist friends learned, we can gain insights about relationships in our data that help us make better decisions. Second, understanding how the AI calculates its decisions can help us rectify and untangle thorny ethical considerations.

For example, suppose you have an AI that weights geography, represented by zip code, heavily in its decisions.

Because zip code correlates with racial and ethnic groups, you have an AI that unintentionally weights race and ethnicity heavily in its decisions.

However, you may have very good reasons to want to consider geography as a feature in your model. This was a dilemma faced by another one of our clients.

We used this knowledge to change how we incorporated geography into our AI, with the goal of producing more equitable results. We then tested the model's predictions for racial or ethnic bias and were able to determine that bias was not present, while still producing a highly effective model.
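One common way to test predictions for group bias is to compare how often the model gives a favorable outcome to each group. The sketch below does this for a binary prediction. It is an illustration under stated assumptions, not the test used for the client: the predictions, the group labels `"A"` and `"B"`, and the helper functions `positive_rate_by_group` and `parity_gap` are hypothetical.

```python
from collections import defaultdict


def positive_rate_by_group(predictions, groups):
    """Share of positive (1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Largest difference in positive-prediction rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical binary model predictions and the group each record belongs to.
predictions = [1, 0, 1, 1, 0, 1, 0, 1]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = positive_rate_by_group(predictions, groups)
for group, rate in sorted(rates.items()):
    print(f"group {group}: positive rate = {rate:.2f}")
print(f"parity gap = {parity_gap(rates):.2f}")
```

A gap near zero suggests the groups receive favorable predictions at similar rates. How large a gap is acceptable, and whether this is the right fairness measure at all, depends on the application.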

By understanding how your AI uses information to produce results, you can get ahead of these issues before deploying and before they become public relations nightmares.

Businesses that understand how their models make decisions are less likely to be blindsided by the unintended consequences of AI.

They are in a better position to use AI to increase revenue, benefiting from the full range of insight available, while protecting themselves and society.

Demystifying the black box can allow us to make better decisions while maintaining our brand’s full potential. Human-centered AI is about using AI to benefit humans first, and part of that is understanding why decisions are made.

Julie Novic

Julie Novic is a Data Scientist at Pandata.